onlinestudy.guru

Learn more achieve more

Copyright © 2018-19 onlinestudy.guru powered by redhunt.in

We have unique plans for you.

Educational website – ₹2,500/-

Student management system – ₹7,000/-

Brochure – ₹5,000/-

E-commerce – ₹9,000/-

Portal – ₹6,000/-

Wiki – ₹6,000/-

Social Media – ₹7,000/-

+ Get 4 months of free technical support from our developer

Contact us – edu@onlinestudy.guru

MDU MCom last three years' question papers download 2017 complete set

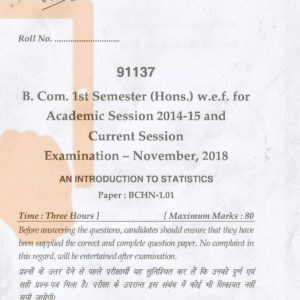

BCOM HONS 1ST SEM NOV 2018 SAMPLE PAPERS MDU
